A framework for deploying AI where it actually creates value

A note on terms: This framework is specifically about agentic AI. It is worth being clear about what that means and what it doesn’t.

Most people’s direct experience of AI is with tools: a chatbot that drafts text, a copilot that suggests code, a summarisation feature that condenses a meeting. These are genuinely useful. They augment individual cognition and make people faster. But the output is always inert until a human acts on it. The AI produces something. The person decides what to do with it.

Agentic AI is different in kind. The clue is in the name: you are giving AI agency, meaning the ability to perceive a situation, decide what to do, and act without a human approving each step. An agent can monitor signals, evaluate options, execute tasks across multiple systems, and initiate new actions based on what it finds. It isn’t a tool a person uses. It is an actor operating on behalf of the organisation within boundaries that the organisation defines.

That distinction matters because it changes everything about how deployment should be approached, which is what this framework addresses.

How this differs from RPA

Robotic process automation was the previous wave of process automation, and many organisations spent significant time and money on it. The comparison is instructive.

RPA automates steps in a process where the rules are fully known, the inputs are structured, and the environment is controlled. An RPA bot follows a precise script. It is fast and consistent when conditions match what it was designed for. It breaks the moment something unexpected happens — an unrecognised input format, an exception case, a process change upstream. RPA is a workflow tool built for deterministic environments.

Agentic AI is designed for the opposite situation. It operates where inputs are variable, information is incomplete, and judgment is required. It handles exceptions rather than failing on them. Where RPA requires a human to have already solved the problem and translated the solution into explicit rules, an agent can work through a situation that hasn’t been fully pre-specified.

The risk of conflating the two is significant. Organisations that mentally file agentic AI under “another automation wave” will deploy it where RPA already works (structured, back-office, deterministic) and evaluate it against RPA metrics. They will miss the value proposition entirely: operating in the judgment-heavy processes that RPA could never reach.

What the two approaches share is this: neither works without process understanding. The difference is that RPA requires exhaustive upfront specification of every rule and exception, while agentic AI requires clarity about decision boundaries, available signals, and acceptable outcomes. The homework is different in form but equally non-negotiable in practice.

Why current AI investment is structurally designed to underperform

Most organisations aren’t failing at AI because the technology is insufficient. They are failing because of where they choose to deploy it and because their own structure makes it nearly impossible to choose differently.

The processes where agentic AI could move the needle (pricing, sales performance, customer acquisition, retention) are precisely the ones organisations avoid. Investment concentrates instead where stakes are low and, not coincidentally, where value is also low: invoice processing, meeting summarisation, internal helpdesks. The logic is understandable. Low-stakes processes are easier to govern and easier to justify. But performance is then measured against the strategic ambition that justified the investment, so the gap is predictable. The conclusion that AI is overhyped is wrong; the investment simply went to the wrong place.

The deeper problem is structural. Deploying agentic AI into high-leverage processes requires cross-functional agreement on objectives, success metrics, and accountability. In most organisations, that agreement doesn’t exist at the required level of specificity. The CMO optimises for growth. The CFO optimises for predictability. The CIO optimises for control. Each is individually rational. But when a decision must be made about where AI goes, what success looks like, and who bears the downside, these objectives pull in different directions. The initiatives that survive are the ones nobody objects to, which is to say, the back office.

This isn’t the innovator’s dilemma. That describes companies disrupted while serving their best customers well. This is different: organisations that have decided they want a technology but whose own governance structures and incentive misalignments prevent them from deploying it where it matters. The barrier isn’t capability. It is architecture. A quieter dynamic reinforces this further: when agentic AI exposes that activities considered strategic are actually repeatable operational patterns, the people whose authority rests on that perceived expertise become adversaries of adoption. Investment drifts toward functions where no one’s role is threatened.

This is the starting condition. Changing it requires being more precise about where agentic AI actually belongs.

Most organisations are looking for AI opportunities in the wrong places

The real opportunity for agentic AI isn’t in automating what computers already do well, nor in replacing judgment at the highest levels. It sits in a large middle ground that most organisations are systematically ignoring.

Three categories of work determine where agentic AI creates value. Calculation covers deterministic execution where rules are known and outputs are verifiable: financial close, payroll, transaction processing. Software already dominates here. Strategic judgment covers decisions shaped by values, risk appetite, and long-term accountability. Agentic AI can inform these, but delegation is inappropriate; responsibility cannot be transferred to a probabilistic system. Between the two sits operational judgment: bounded decisions made repeatedly under incomplete information. Sales qualification, pricing adjustments, churn intervention, supply chain exceptions. This is where agentic AI has the largest untapped potential, and it can play both roles here, supporting human decisions and, where boundaries are well defined, taking action autonomously.

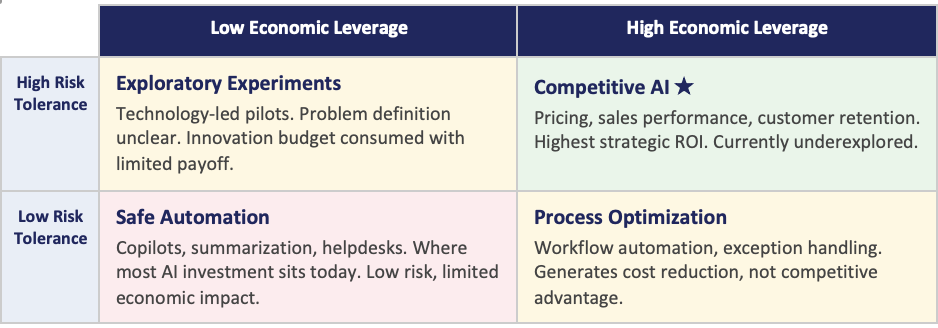

Most current AI deployment maps onto the wrong quadrant. Plotting initiatives along two axes, organisational risk tolerance and economic leverage, reveals the same pattern everywhere: investment clusters in low-risk, low-leverage territory. Safe automation, modest efficiency gains. The high-leverage quadrant, where agentic AI could influence pricing, sales outcomes, and customer retention, remains largely unexplored. The technology to operate there exists. What is missing is the organisational willingness to go there.

Knowing where the opportunity lies is necessary but not sufficient. There is an obstacle most AI frameworks never surface, and it sits inside the organisation itself.

The obstacle nobody talks about: You may not know your processes

The most underestimated barrier to meaningful agentic AI adoption is that organisations frequently attempt to automate processes they do not fully understand.

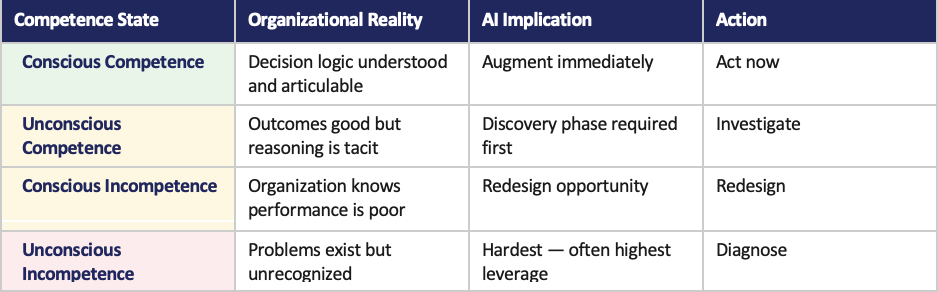

Experienced sales managers, pricing specialists, and customer success teams often perform well without being able to articulate why. Their effectiveness relies on tacit knowledge, pattern recognition, and informal signals rarely captured in formal systems. This is how expertise works. It becomes a problem when organisations treat these processes as understood simply because they are familiar. A four-stage competence model (unconscious incompetence, conscious incompetence, conscious competence, unconscious competence) clarifies what is at stake: experienced practitioners typically sit in the fourth stage, performing well without being able to say why.

Automating unconscious competence without first externalising it produces agentic AI built on an incomplete model of the work. It underperforms, and the wrong conclusion is drawn. The problem wasn’t the technology. The organisation simply didn’t know its own processes well enough.

One further boundary matters. Organisations only control their side of the value stream. In any customer journey, some decisions belong to the firm and some belong to the customer. Agentic AI can only act on the former. In customer-facing processes, the goal isn’t automation but optimising the quality and timing of influence, which is inherently probabilistic. This also opens a distinct opportunity: modelling customer behaviour at an individual level, replacing blunt segmentation with continuous signals that inform when and how the firm acts.

With this diagnostic picture in place, a clear adoption sequence emerges, one that starts from understanding, not from technology.

A six-step path that starts with understanding before automating

The right sequence moves from economic priority to decision mapping to process understanding to gradual delegation, in that order.

- Identify high-leverage value streams. Start with what is economically important, not what is easiest to automate. This single discipline eliminates most low-ROI initiatives before they begin.

- Map the decision architecture. Within each value stream, identify where decisions occur, what signals drive them, which decisions the firm controls, and which belong to customers or partners. This isn’t traditional process mapping — it is a map of where judgment is exercised and what it draws on.

- Classify process competence. Determine whether decision logic is explicit or tacit. Explicit processes can be augmented immediately. Tacit processes require a discovery phase first. Skipping this step is where most initiatives go wrong.

- Externalise tacit knowledge. Where unconscious competence exists, surface it deliberately: analyse historical decisions, mine communications and CRM data, use AI as a sparring partner to challenge practitioner reasoning. The goal is to convert tacit heuristics into signals explicit enough to inform a system.

- Introduce agentic AI augmentation. With decision logic partially explicit, deploy agents as decision partners — interpreting signals, generating recommendations, surfacing exceptions, and, where boundaries permit, taking action. Humans remain accountable while the organisation learns how agents actually perform under real conditions.

- Delegate bounded decisions. Once confidence is established, delegate specific operational decisions to agents under defined policies, escalation thresholds, and active monitoring. Delegation with explicit boundaries, not abdication.
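The routing logic in steps two to six can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not an implementation of the framework: the `DecisionPoint` record, the `next_step` and `act_or_escalate` functions, and the `DelegationPolicy` with its discount ceiling and confidence floor are hypothetical names chosen for a pricing example.

```python
from dataclasses import dataclass
from enum import Enum


class Logic(Enum):
    EXPLICIT = "explicit"  # practitioners can state the decision rules
    TACIT = "tacit"        # performed well, but the reasoning is not captured


@dataclass
class DecisionPoint:
    """Hypothetical entry in a decision-architecture map (step 2)."""
    name: str
    firm_controlled: bool  # False if the decision belongs to a customer or partner
    logic: Logic           # classified in step 3
    confidence_established: bool = False  # set once augmentation has proven itself


def next_step(d: DecisionPoint) -> str:
    """Route one decision point to its next step in the sequence."""
    if not d.firm_controlled:
        # Agents cannot act on decisions the customer owns; the firm can
        # only shape the quality and timing of its influence.
        return "influence"
    if d.logic is Logic.TACIT:
        return "externalise"  # step 4: discovery before any automation
    if not d.confidence_established:
        return "augment"      # step 5: agent recommends, human stays accountable
    return "delegate"         # step 6: bounded autonomy


@dataclass
class DelegationPolicy:
    """Hypothetical boundaries for a delegated pricing decision."""
    max_discount_pct: float  # largest discount the agent may grant on its own
    min_confidence: float    # below this, the agent must escalate


def act_or_escalate(policy: DelegationPolicy, discount_pct: float, confidence: float) -> str:
    """Act only inside the policy boundaries; escalate everything else."""
    within_bounds = discount_pct <= policy.max_discount_pct
    confident = confidence >= policy.min_confidence
    return "act" if within_bounds and confident else "escalate"


if __name__ == "__main__":
    renewal = DecisionPoint("renewal decision", firm_controlled=False, logic=Logic.EXPLICIT)
    discount = DecisionPoint("deal discount", firm_controlled=True, logic=Logic.TACIT)
    print(next_step(renewal), next_step(discount))  # influence externalise

    policy = DelegationPolicy(max_discount_pct=5.0, min_confidence=0.8)
    print(act_or_escalate(policy, 3.0, 0.9))  # act
    print(act_or_escalate(policy, 8.0, 0.9))  # escalate
```

The design choice worth noting is that the default is escalation: the agent acts only when every boundary is satisfied, which is what separates delegation from abdication.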

This sequence only works when applied to a contained, meaningful scope. That is what the next principle addresses.

Pick one value stream and make it work end-to-end

The most effective way to apply this framework is to resist broad deployment and instead make one economically meaningful value stream work completely before scaling.

Partial automation creates shifting bottlenecks. Accelerating one step increases its throughput without increasing downstream capacity, so the constraint moves rather than disappears. Improving lead qualification while proposal creation and legal review remain unchanged doesn’t shorten the sales cycle. A vertical slice forces attention on the entire flow and produces system-level improvement rather than local gains that vanish at the next handoff.
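The shifting bottleneck can be shown with a toy model. The sketch below assumes a strictly serial flow in which every deal passes through every stage, and it ignores queueing and variability; the stage names and weekly capacities are invented for illustration. Under those assumptions, the flow’s throughput is simply the capacity of its slowest stage.

```python
def flow_throughput(capacities: dict[str, int]) -> int:
    """Deals per week a serial flow can complete: the slowest stage sets the pace."""
    return min(capacities.values())


def bottleneck(capacities: dict[str, int]) -> str:
    """Name of the stage that currently constrains the flow."""
    return min(capacities, key=capacities.get)


# Hypothetical weekly capacities for a sales value stream.
baseline = {"lead_qualification": 40, "proposal_creation": 15, "legal_review": 20}

# An agent doubles lead qualification; nothing downstream changes.
qualification_doubled = {**baseline, "lead_qualification": 80}

# Proposal creation is improved as well; the constraint moves to legal review.
proposals_doubled = {**qualification_doubled, "proposal_creation": 30}

for label, flow in [("baseline", baseline),
                    ("qualification doubled", qualification_doubled),
                    ("proposals doubled", proposals_doubled)]:
    print(f"{label}: {flow_throughput(flow)} deals/week, bottleneck = {bottleneck(flow)}")
```

Doubling qualification leaves throughput at 15 deals a week in this toy model; only work across the whole slice moves the number, and each improvement exposes the next constraint.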

A complete slice also forces simultaneous resolution of all three readiness conditions (organisational, human, and technical) within the same scope. This compression surfaces real constraints early, when they are cheaper to fix. The output isn’t just a working agentic AI capability. It is a template, a set of tested assumptions, and organisational confidence that transfers to the next value stream.

The right slice has three characteristics: high economic leverage, frequent decision loops, and clear firm ownership of the decisions involved.

None of this holds without the foundational work most organisations skip. That is the subject of the final section.

The prerequisites cannot be skipped, only deferred at greater cost

Skipping the three conditions for successful agentic AI deployment doesn’t eliminate the cost. It moves it downstream, where it is harder and more expensive to fix.

Understand the work before automating it

Map the decision architecture: where decisions occur, what signals drive them, which the firm controls, and whether the logic is explicit or tacit. The failure mode here isn’t immediate; it is that the organisation automates a misunderstood process, the system underperforms, and the wrong lesson is drawn. Beyond avoiding failure, this condition is where risk and reward become quantifiable. Without a clear decision map, the organisation cannot identify which decisions carry the most economic leverage, where error tolerance is lowest, or where agent autonomy should stop. This isn’t diligence for its own sake; it is how you price what you are investing in.

Assign accountability before deployment, not after

Who owns the outcome when an agent gets something wrong? If that question cannot be answered, the initiative will stall — not for technical reasons, but because unassigned accountability generates governance friction. Edge cases accumulate. Progress slows to the pace of the most cautious stakeholder. The deeper cost is structural: without clear ownership, individually rational behaviour produces collectively irrational outcomes. Each function defends its position. The initiative survives only in the form nobody opposes. Define accountability, performance measures, and how roles evolve before deployment. Organisations that treat this as a change management afterthought find it is actually a precondition.

Build data infrastructure around how decisions are actually made

This condition should come last: the technical layer cannot be specified until the organisational and human questions are resolved. The failure mode is building infrastructure around formal processes rather than real ones. If decisions rely on signals in email threads, informal conversations, or experienced intuition, a data architecture built on CRM fields and transaction logs will miss them. The agent is built on the documented process. The documented process isn’t the actual process. The result is an agent that is confidently wrong, optimised for how work was supposed to happen, not how it does.

The organisations that gain lasting advantage from agentic AI aren’t those with the most sophisticated models. They are the ones who knew themselves well enough to deploy those models where they would work.

Preparation isn’t the opposite of speed. It is what makes speed sustainable.

A note for those who’ve been here before

Organisations that lived through RPA programs are better prepared for this than they may realise, and better prepared than those who haven’t.

If those programs succeeded, the process understanding, governance models, and change management experience they generated are directly transferable. The competence built isn’t obsolete. It is a foundation. The question isn’t whether to start again from scratch, but how to extend what is already known into territory that was previously unreachable.

If those programs struggled — and many did, precisely because the prerequisites were underestimated — the lessons are equally valuable. The gaps that caused difficulty then are the same gaps this framework asks organisations to address now: insufficient process clarity, accountability that was never properly assigned, and data that described how work was supposed to happen rather than how it did.

In either case, the people who carry that experience are among the most important voices in any agentic AI program. They have already paid the tuition. The framework has a name for what they have: conscious competence, or at minimum, a clear-eyed view of what becoming consciously competent would require.

That is exactly where the work should start.

Where to start: Three steps to take now

The framework describes a complete adoption logic. But no organisation acts on a complete framework all at once. These three steps are designed to create early momentum without requiring the full program to be in place first.

- Pick one value stream and audit how well you actually understand it. Choose a process that is economically significant, one where better decisions would visibly move revenue or cost. Then ask, honestly, whether the decision logic within that process is explicit or tacit. Can the people who perform it well explain how they do it? Is the reasoning captured anywhere? This audit doesn’t require a large team or a long timeline. It requires the right conversation with the right practitioners. What it produces is clarity about whether you are ready to augment, or whether a discovery phase needs to come first.

- Map the accountability gap before touching the technology. For the same value stream, identify every decision point and ask who currently owns each one and who would own it if an agent were involved. Where the answer is unclear, that is where the initiative will stall. Resolving accountability in advance isn’t a governance exercise. It is the precondition for moving at all. This conversation also tends to surface the political dynamics early, when they are easier to navigate than after a deployment is already underway.

- Start the data conversation with a different question. Rather than asking what data you have, ask what signals your best people actually use when making the decisions in your chosen value stream. Then ask whether those signals are captured anywhere. The gap between those two answers is your real data readiness problem, and it is almost always more specific and more addressable than a general data quality discussion. Closing that gap, even partially, is what makes the difference between an agent built on a real model of the work and one built on the documentation of how the work was supposed to happen.

These three steps don’t require a program. They require a conversation, a decision, and an honest audit. The organisations that begin there are the ones that eventually scale. The ones that begin with the technology are the ones that end up wondering why it didn’t work.